0G vs Bittensor: Key Differences Between Decentralized AI Infrastructure and AI Model Networks

As AI and blockchain integration accelerates, decentralized AI is evolving along two distinct paths. One path centers on building collaborative networks around AI models themselves, while the other focuses on developing the foundational infrastructure that powers AI applications.

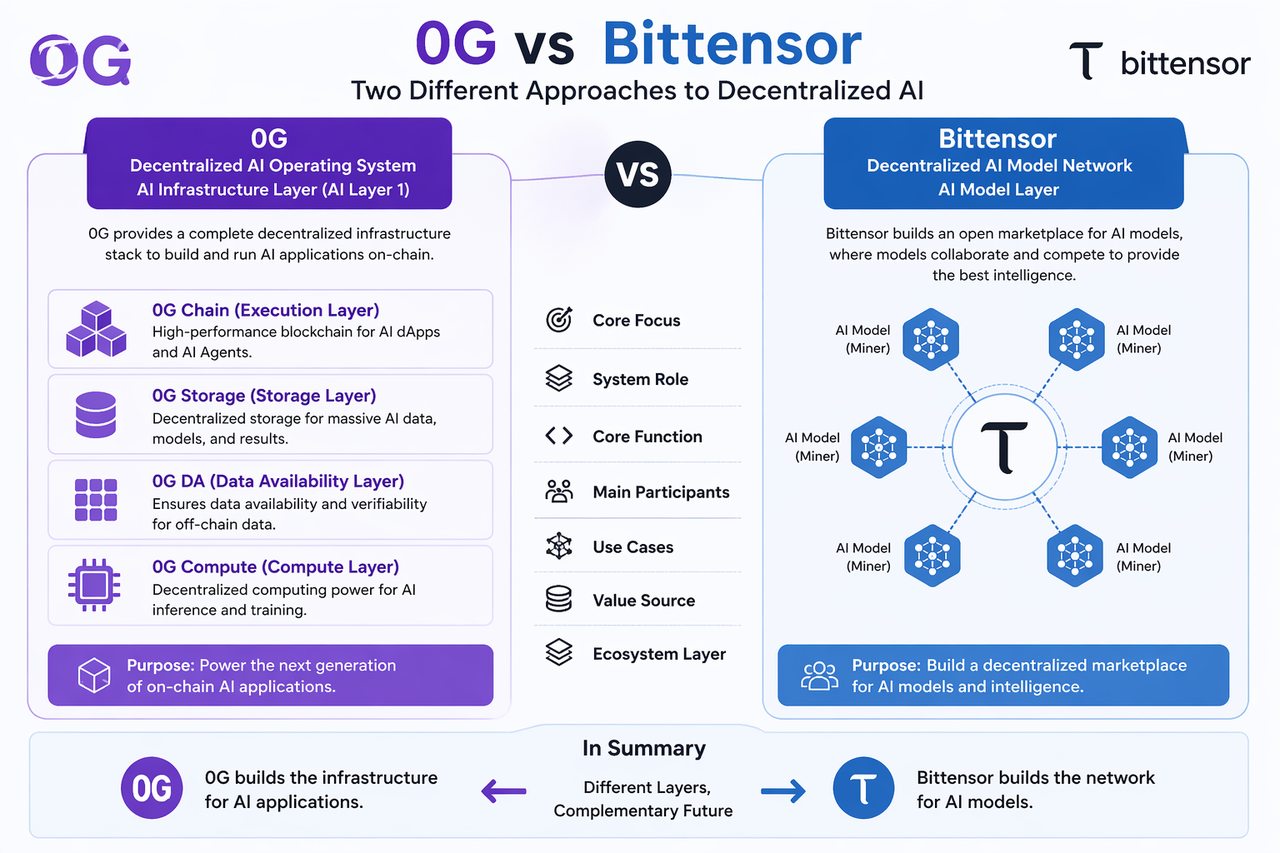

Bittensor and 0G exemplify these two approaches. Bittensor is dedicated to enabling global AI models to collaborate through incentive mechanisms, whereas 0G is designed to provide high-performance, scalable environments for AI applications. This strategic divergence shapes their respective roles within the ecosystem.

0G and Bittensor: Positioning in the AI Ecosystem

0G and Bittensor each occupy distinct layers within the AI ecosystem.

0G serves as the foundational infrastructure (AI Infrastructure Layer), providing operational environments for AI applications, including computation, storage, and data availability. Its mission is to become the AI Layer1, empowering AI Agents to run efficiently on-chain.

Bittensor, by contrast, operates at the protocol layer, connecting AI model providers and validators through incentive mechanisms to create a decentralized AI model marketplace.

In essence, 0G is focused on “running AI,” while Bittensor is focused on “connecting AI.”

Core Comparison: 0G vs Bittensor

From a systems architecture perspective, the clearest way to see how they differ is to compare which layer of the AI stack each one targets.

| Comparison Dimension | 0G | Bittensor |
|---|---|---|
| Core Positioning | Decentralized AI Infrastructure (AI Layer1) | Decentralized AI Model Network |
| Main Objective | Provide operational environments for AI dApps and AI Agents | Build an open AI model collaboration and incentive network |
| System Role | AI Application Infrastructure Layer | AI Model and Inference Network Layer |
| Technical Architecture | Modular: Chain, Storage, DA, Compute | Subnet-driven machine learning network |
| Core Capabilities | Execution, storage, data availability, decentralized computing | AI model training, inference, and contribution incentives |
| Target Audience | AI developers and application builders | AI model providers and researchers |
| Application Scenarios | AI Agents, on-chain AI applications, AI dApps | Decentralized inference services, model marketplaces |
| Value Source | Infrastructure utilization and AI application demand | Model contributions and inference quality rewards |
| Ecosystem Level | AI Infrastructure Layer (Infra Layer) | AI Model Network Layer (Model Layer) |
| Role in the Stack | Underlying support for AI applications | Network for supplying AI intelligence |

0G is a modular AI Layer1 network with four layers (Chain execution, Storage, DA for data availability, and Compute), all engineered to support AI workloads.

Bittensor, on the other hand, is built around incentive mechanisms. Its core is a network of subnets that coordinates contributions and reward distribution among diverse AI models, essentially forming an "AI model economic system."

0G: The AI Layer1 Infrastructure Network

0G is engineered to deliver a comprehensive AI Infrastructure Stack, enabling AI applications to run natively on-chain.

Its four-layer architecture supports AI Agents and on-chain AI applications, consisting of:

- The execution layer for logic processing

- The storage layer for data persistence

- The DA layer for data availability, ensuring that published data can be retrieved and verified

- The compute layer for decentralized computing power

In this way, 0G functions as an “AI operating system,” prioritizing computational power and infrastructure integrity.
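To make the division of labor among the four layers more concrete, here is a minimal Python sketch of how a single AI Agent task might flow through them. Every class and function name (`StorageLayer`, `DALayer`, `ComputeLayer`, `ExecutionLayer`, `run_agent_task`) is hypothetical and is not 0G's actual API. The DA layer is reduced to a single SHA-256 commitment, whereas a production data availability layer uses considerably more elaborate techniques.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class StorageLayer:
    """Hypothetical content-addressed store: each blob is keyed by its SHA-256 digest."""
    blobs: dict[str, bytes] = field(default_factory=dict)

    def put(self, data: bytes) -> str:
        key = hashlib.sha256(data).hexdigest()
        self.blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        return self.blobs[key]


@dataclass
class DALayer:
    """Hypothetical DA layer: publishes commitments so anyone can check data against them."""
    commitments: set[str] = field(default_factory=set)

    def publish(self, data: bytes) -> str:
        commitment = hashlib.sha256(data).hexdigest()
        self.commitments.add(commitment)
        return commitment

    def verify(self, data: bytes, commitment: str) -> bool:
        # Data is accepted only if its commitment was published and the hash matches.
        return commitment in self.commitments and hashlib.sha256(data).hexdigest() == commitment


@dataclass
class ComputeLayer:
    """Hypothetical compute layer: runs a toy job (word count) off the execution layer."""

    def run(self, data: bytes) -> int:
        return len(data.split())


@dataclass
class ExecutionLayer:
    """Hypothetical chain: an append-only log of (input_key, result) records."""
    log: list[tuple[str, int]] = field(default_factory=list)

    def record(self, input_key: str, result: int) -> int:
        self.log.append((input_key, result))
        return len(self.log) - 1  # the log index serves as a toy transaction id


def run_agent_task(data: bytes, storage: StorageLayer, da: DALayer,
                   compute: ComputeLayer, chain: ExecutionLayer) -> int:
    key = storage.put(data)          # storage layer: persist the input
    commitment = da.publish(data)    # DA layer: make the input available and checkable
    payload = storage.get(key)
    if not da.verify(payload, commitment):
        raise ValueError("stored data does not match its published commitment")
    result = compute.run(payload)    # compute layer: do the heavy work
    return chain.record(key, result) # execution layer: record only the compact result


if __name__ == "__main__":
    storage, da, compute, chain = StorageLayer(), DALayer(), ComputeLayer(), ExecutionLayer()
    tx = run_agent_task(b"summarize this agent memory", storage, da, compute, chain)
    print(tx, chain.log[tx][1])  # prints "0 4"
```

The point of the sketch is the separation of concerns: bulk data and heavy computation stay off the execution layer, which records only a compact result that points back to data anyone can verify.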

Bittensor: Decentralized AI Model Network

Bittensor’s primary goal is to establish an open AI model network, fostering competition and collaboration among models through incentives.

Within this system, various models act as nodes, participating in the network and earning rewards based on their contribution quality. This structure aligns more with an AI Model Marketplace than with an infrastructure layer.

As such, Bittensor is focused on “the production and distribution of AI intelligence,” rather than “the operational environment for AI.”
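The idea of rewarding models by contribution quality can be illustrated with a deliberately simplified Python sketch. Validators score miners (model providers), each validator's scores are normalized and weighted by its stake, and a fixed emission is split in proportion to the resulting consensus. The function name, the stake and score units, and this aggregation rule are all assumptions for illustration. This is not Bittensor's actual consensus algorithm, which is more involved.

```python
def distribute_rewards(
    validator_stake: dict[str, float],
    scores: dict[str, dict[str, float]],
    emission: float,
) -> dict[str, float]:
    """Hypothetical, simplified reward split (NOT Bittensor's real consensus).

    validator_stake: validator -> stake (hypothetical units)
    scores: validator -> {miner -> non-negative quality score}
    emission: total reward to distribute among miners
    """
    total_stake = sum(validator_stake.values())
    if total_stake <= 0:
        raise ValueError("total validator stake must be positive")

    consensus: dict[str, float] = {}
    for validator, miner_scores in scores.items():
        weight = validator_stake.get(validator, 0.0) / total_stake
        row_total = sum(miner_scores.values())
        if row_total == 0:
            continue  # this validator expressed no preference
        for miner, score in miner_scores.items():
            # Normalizing each row means a validator's influence depends on its stake,
            # not on how large the raw numbers it submits are.
            consensus[miner] = consensus.get(miner, 0.0) + weight * score / row_total

    total = sum(consensus.values())
    if total == 0:
        return {miner: 0.0 for miner in consensus}
    # Split the emission in proportion to each miner's stake-weighted consensus score.
    return {miner: emission * c / total for miner, c in consensus.items()}


if __name__ == "__main__":
    rewards = distribute_rewards(
        validator_stake={"v1": 3.0, "v2": 1.0},
        scores={"v1": {"m1": 0.9, "m2": 0.1}, "v2": {"m1": 0.5, "m2": 0.5}},
        emission=100.0,
    )
    print({m: round(r, 2) for m, r in rewards.items()})  # {'m1': 80.0, 'm2': 20.0}
```

Weighting validators by stake lets participants with more at risk carry more influence, while normalizing each validator's scores keeps any one of them from inflating a miner's reward simply by reporting larger numbers.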

Application Scenario Differences: 0G vs Bittensor

0G is best suited for on-chain AI applications that demand high computational and storage capacity, such as AI Agents, autonomous execution systems, and complex inference tasks.

Bittensor, in contrast, is ideal for AI model training, model sharing, and distributed intelligence collaboration—use cases like model marketplaces and inference service networks.

The two do not directly compete at the application layer, but instead occupy distinct positions within the AI stack.

Ecosystem Role Comparison: 0G vs Bittensor

Within the decentralized AI ecosystem, Bittensor primarily serves at the model layer, supplying AI intelligence, while 0G provides the infrastructure layer, delivering computation, storage, and execution environments.

As the AI ecosystem matures, these systems are likely to become complementary: model networks provide intelligence, infrastructure supplies the operational foundation, and together they enable more sophisticated AI application ecosystems.

Summary

0G and Bittensor represent two divergent paths in decentralized AI development. Bittensor is focused on AI model networks, building an open machine learning marketplace via incentives; 0G is dedicated to AI infrastructure, providing a complete on-chain environment for AI applications.

They do not compete directly, as each occupies a different layer of the AI ecosystem. As AI applications scale, model networks and infrastructure are expected to collaborate more closely, jointly advancing the decentralized AI ecosystem.

FAQs

What is the key difference between 0G and Bittensor?

0G is an AI Infrastructure Layer1 providing computation and storage; Bittensor is an AI model network focused on model collaboration and incentive distribution.

Which layer does 0G belong to in the AI architecture?

0G is part of the AI Infrastructure Layer, specializing in on-chain AI operational environments and computational infrastructure.

What is Bittensor’s core mechanism?

Bittensor connects AI model nodes through incentive mechanisms, enabling models to compete and earn rewards within the network.

Can 0G and Bittensor work together?

Yes. Because they operate at different layers of the AI stack, they can complement each other: one provides the infrastructure, the other delivers the model network.

Which is more infrastructure-oriented?

0G is more infrastructure-oriented (AI Layer1), while Bittensor is oriented toward the model network (AI Model Layer).
